GIFT-KASTL

A graph foundation model for scientific fracture networks, combining transformer architectures with structured nonlinear refinement.

AI Researcher with a background in Pure and Applied Mathematics.

I work on mechanistic interpretability, sparse and efficient learning, neural operators, graph foundation models, kernel methods, and mathematically grounded AI systems for scientific and structured domains.

I’m interested in understanding model reasoning, extracting circuits, and building efficient learning systems grounded in mathematics.

Selected systems and research contributions at the intersection of machine learning, mathematics, and scientific modeling.

Ongoing mathematical and conceptual work across scientific machine learning, operator learning, and AI.

Why AI systems succeed differently in empirical science and formal mathematics, and what this reveals about learning and proof.

A kernel-based framework for sparse reconstruction and operator-theoretic analysis of dynamical systems.

My work sits at the intersection of mathematical structure, scientific machine learning, and modern AI systems.

Operator learning, kernel methods, graph-based representations, and data-driven modeling of dynamical systems, with an emphasis on mathematically grounded structure.

Mechanistic interpretability, sparse and efficient neural computation, and research-grade experimentation for modern learning systems.

Selected research posters shown in chronological order, highlighting work in scientific machine learning, mathematical AI, and graph-based learning for structured systems.

A research poster presented at the SIAM Conference on Mathematics of Data Science, centered on scientific machine learning, sparse learning, neural operators, and mathematically grounded approaches to structured scientific data.

This poster reflects a broader research direction in scientific AI, efficient learning, and mathematical structure in modern machine learning.

A research poster on graph foundation models for scientific machine learning, exploring how fracture networks and structured physical systems can be modeled using graph-based learning architectures.

This project sits at the intersection of graph AI, scientific machine learning, and foundation-model thinking for structured scientific data.

Selected papers, preprints, and public research artifacts spanning kernel methods, operator learning, and scientific machine learning.

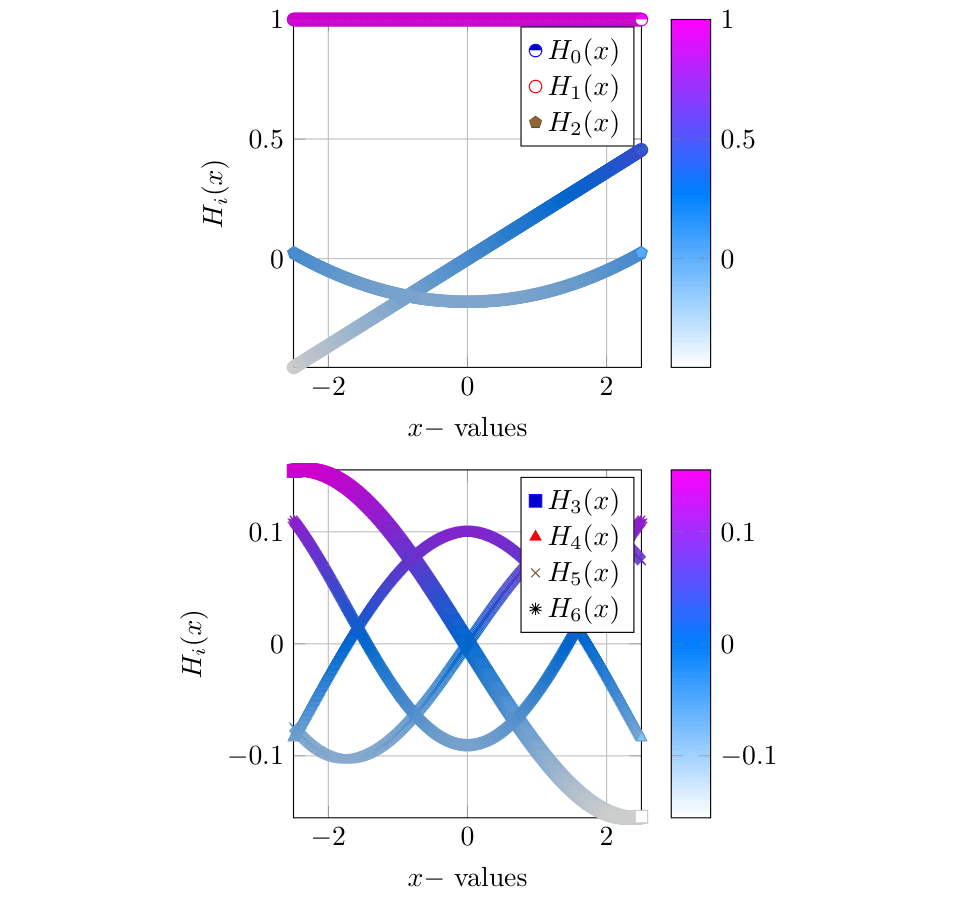

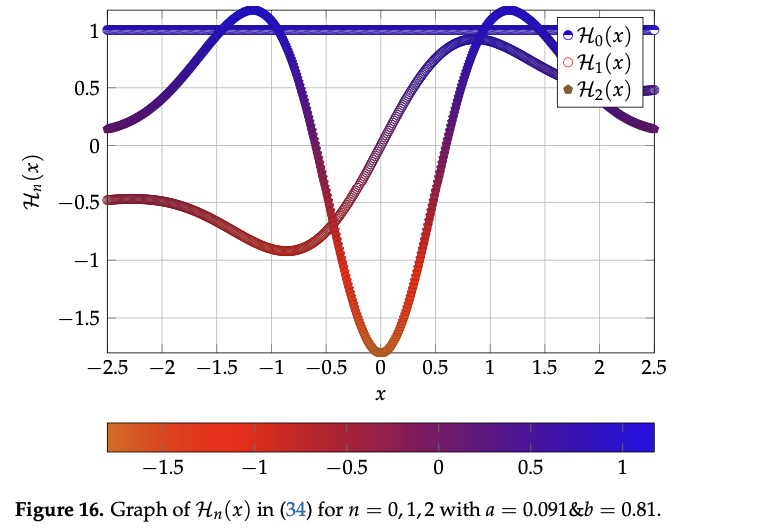

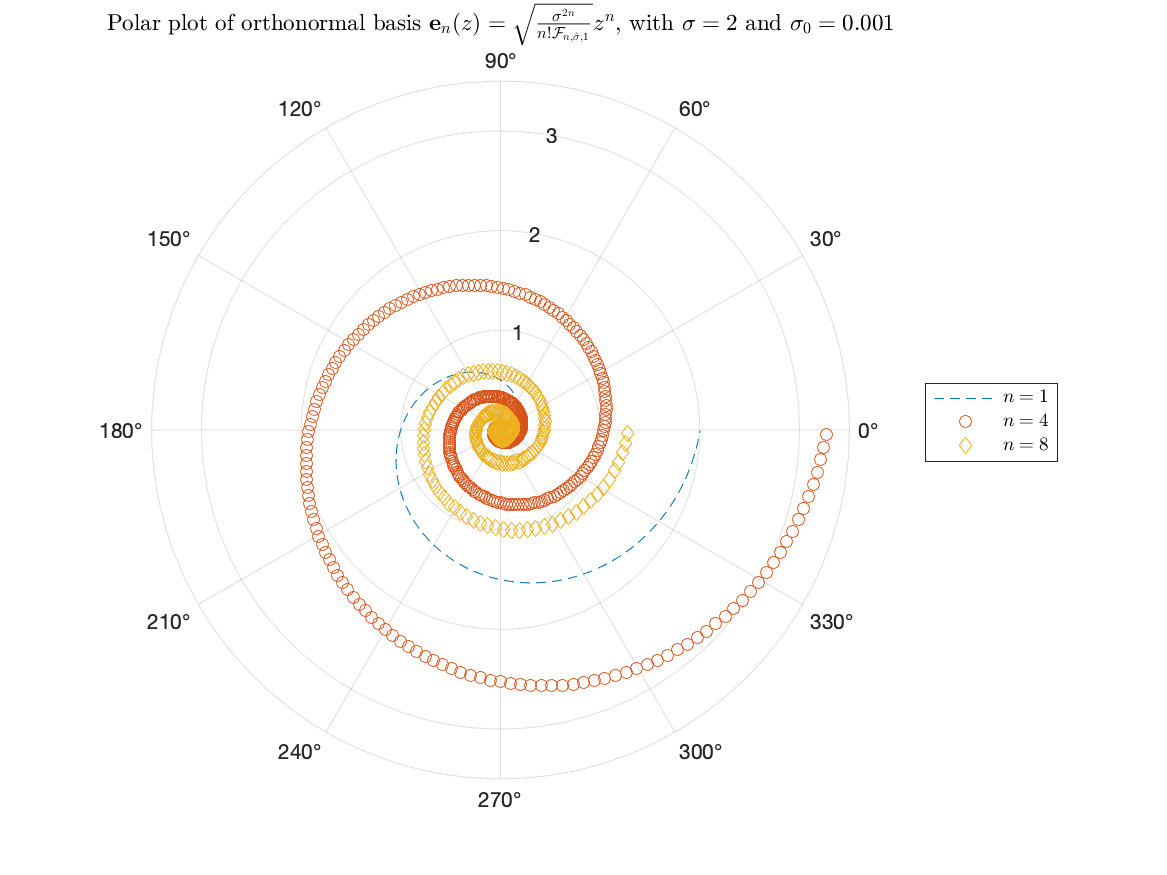

A feature paper on the Generalized Gaussian Radial Basis Function (GGRBF), connecting reproducing kernel Hilbert space theory, orthonormal bases, and machine learning applications.

This work develops the Generalized Gaussian Radial Basis Function (GGRBF), studies its reproducing kernel Hilbert space, and connects it to mathematical structures such as orthonormal bases and Hermite-type eigen-observables.

The project bridges kernel methods, RKHS theory, and machine learning applications, showing how a mathematically enriched radial basis framework can support both theoretical analysis and practical modeling.

Representative technical projects spanning interpretability, operator learning, sparse neural computation, and mathematical modeling for AI systems.

Research and engineering environments where theory, experimentation, and carefully built systems reinforce one another.

Research and engineering roles focused on interpretability, reasoning, efficient learning, and scientific AI, especially in environments that value deep technical work, reproducibility, and mathematically grounded modeling.

High-trust, research-driven teams where I can iterate quickly: run experiments, write clean code, document results, and ship tools that enable others. I prioritize clarity, rigor, and measurable impact.

I aim for repositories that are understandable, runnable, and useful to other researchers.

Where applicable, repositories include requirements.txt, configuration files, fixed seeds, and result plots. If you encounter any issue reproducing results, please reach out.

Open to research collaborations, research engineering roles, and conversations around scientific machine learning, interpretability, and mathematically grounded AI.

I am particularly interested in collaborations involving scientific machine learning, operator learning, kernel methods, sparse representations, graph learning, and mechanistic interpretability.