import torch

import torch.nn as nn

class KASTLLayer(nn.Module):

"""

Kolmogorov-Arnold Superposition Theorem Layer

used as a structured refinement stage after the graph transformer.

"""

def __init__(self, scale: float = 1.0):

super().__init__()

self.scale = scale

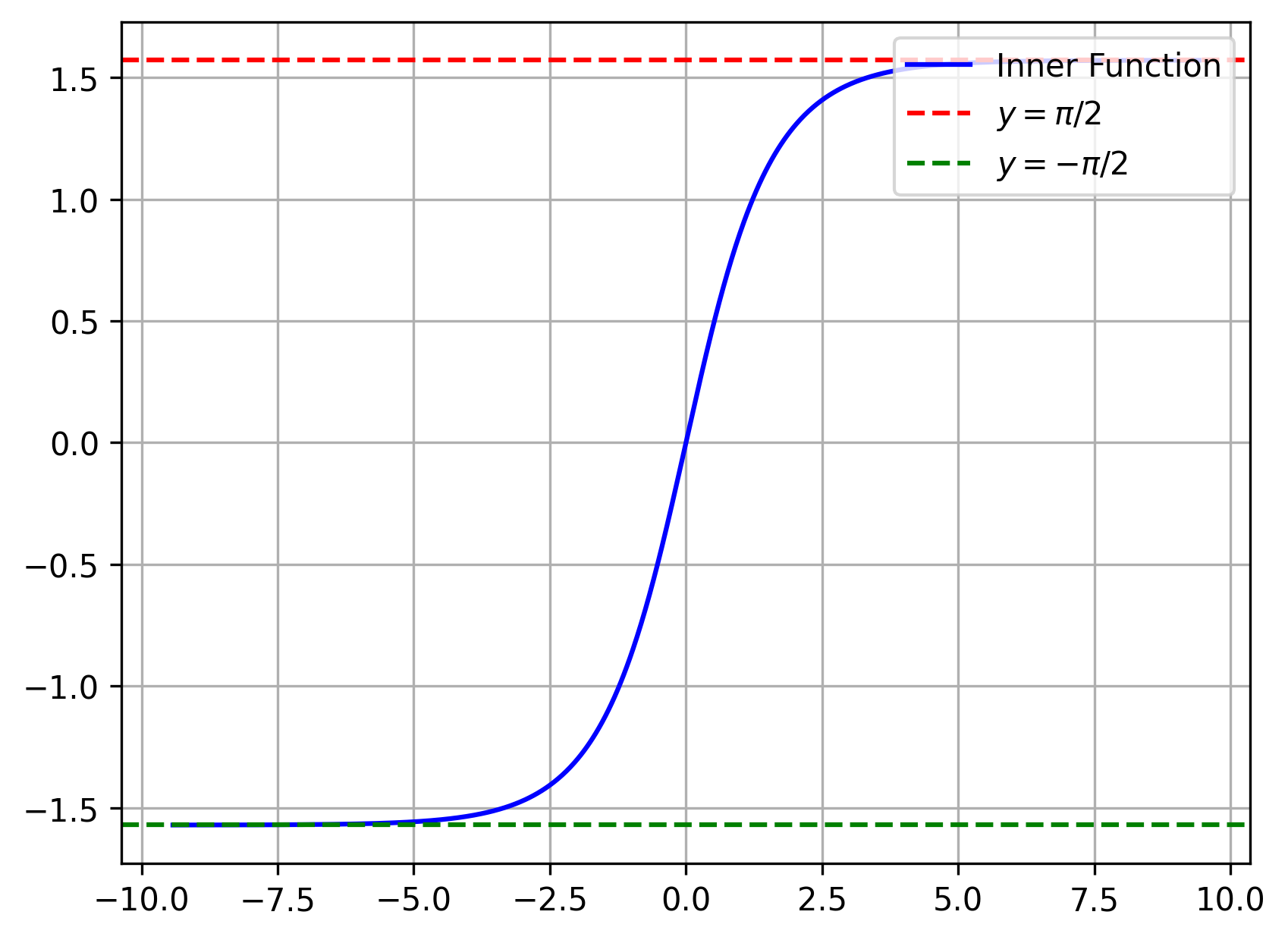

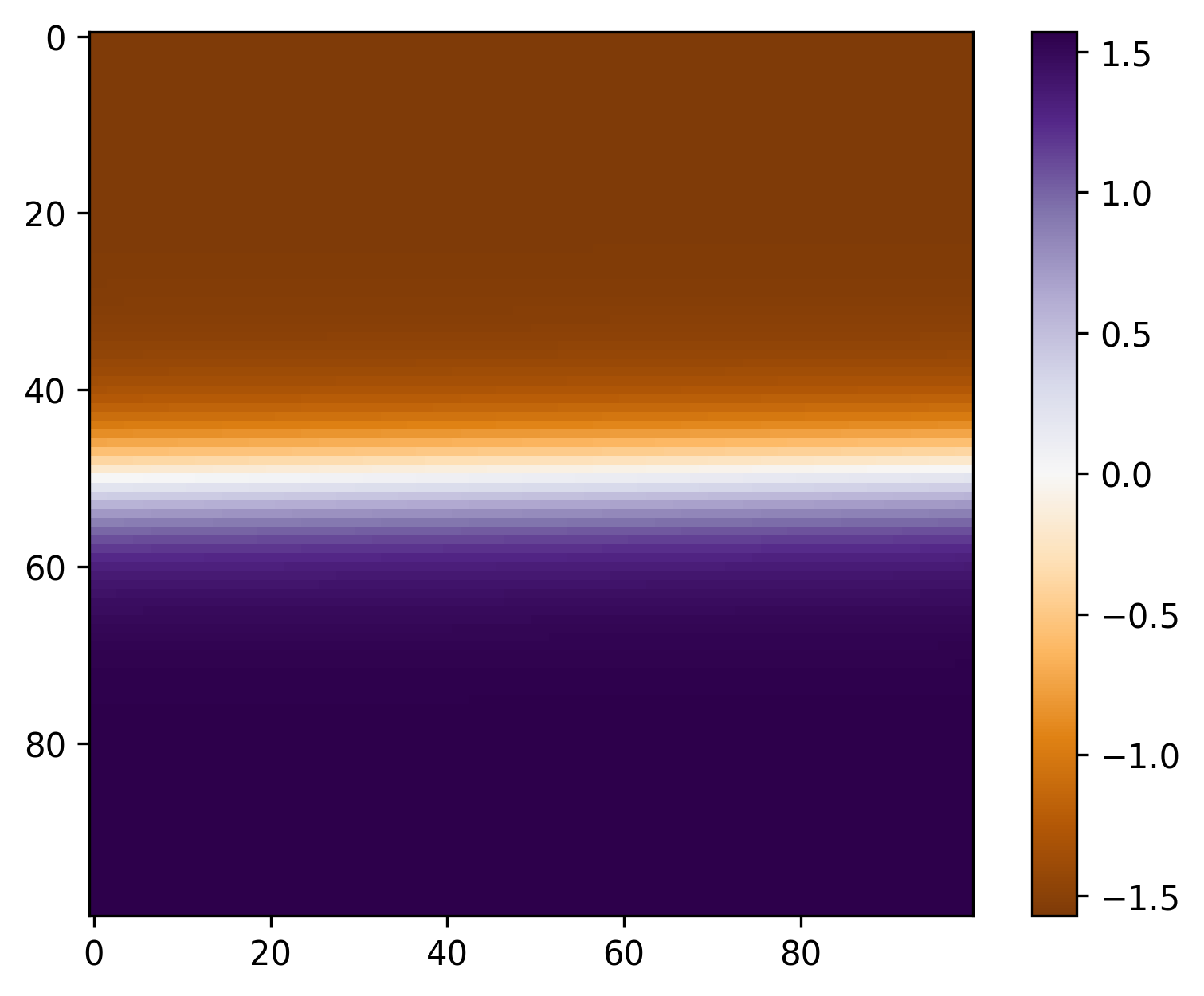

def inner_function(self, x: torch.Tensor) -> torch.Tensor:

return torch.atan(torch.sinh(x))

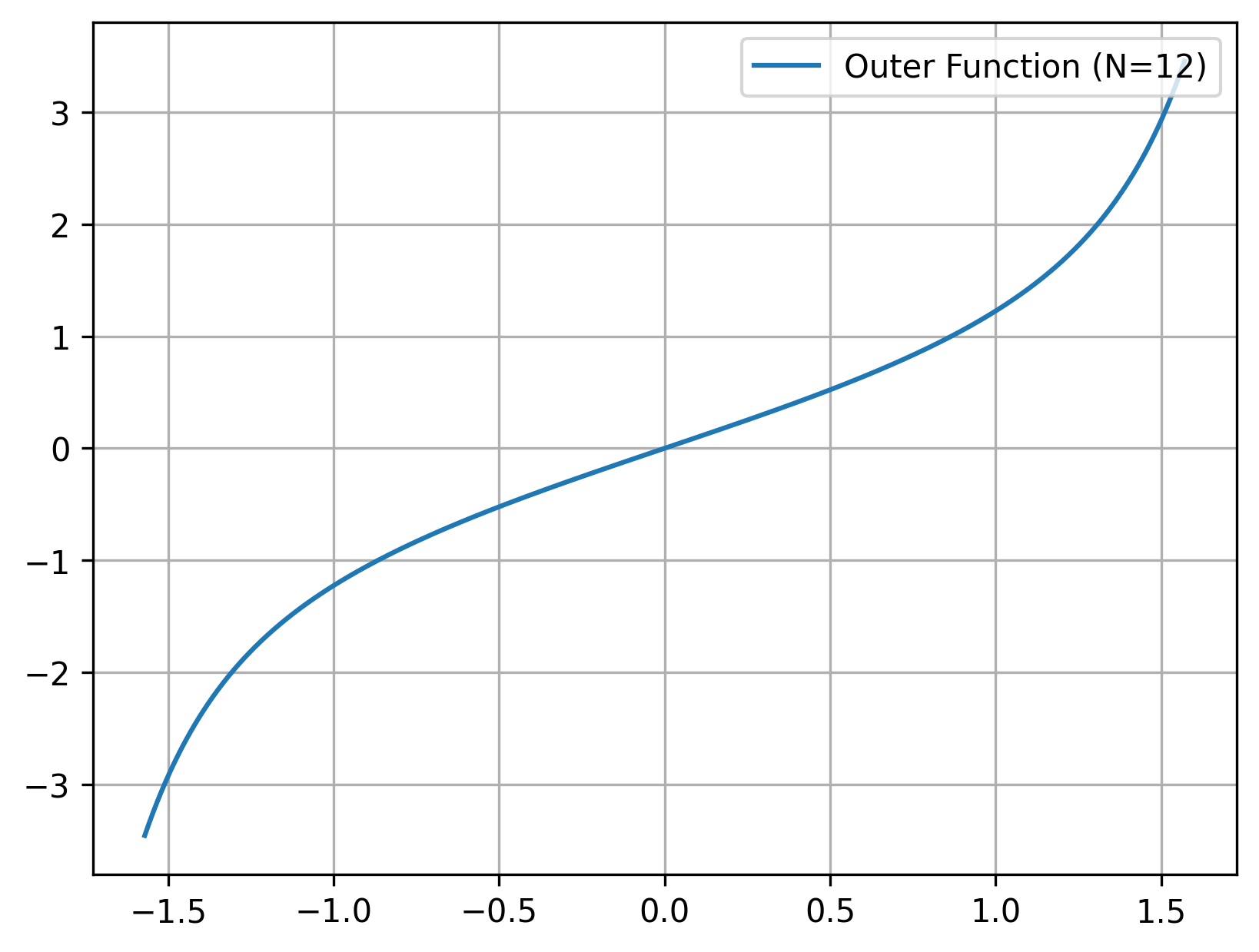

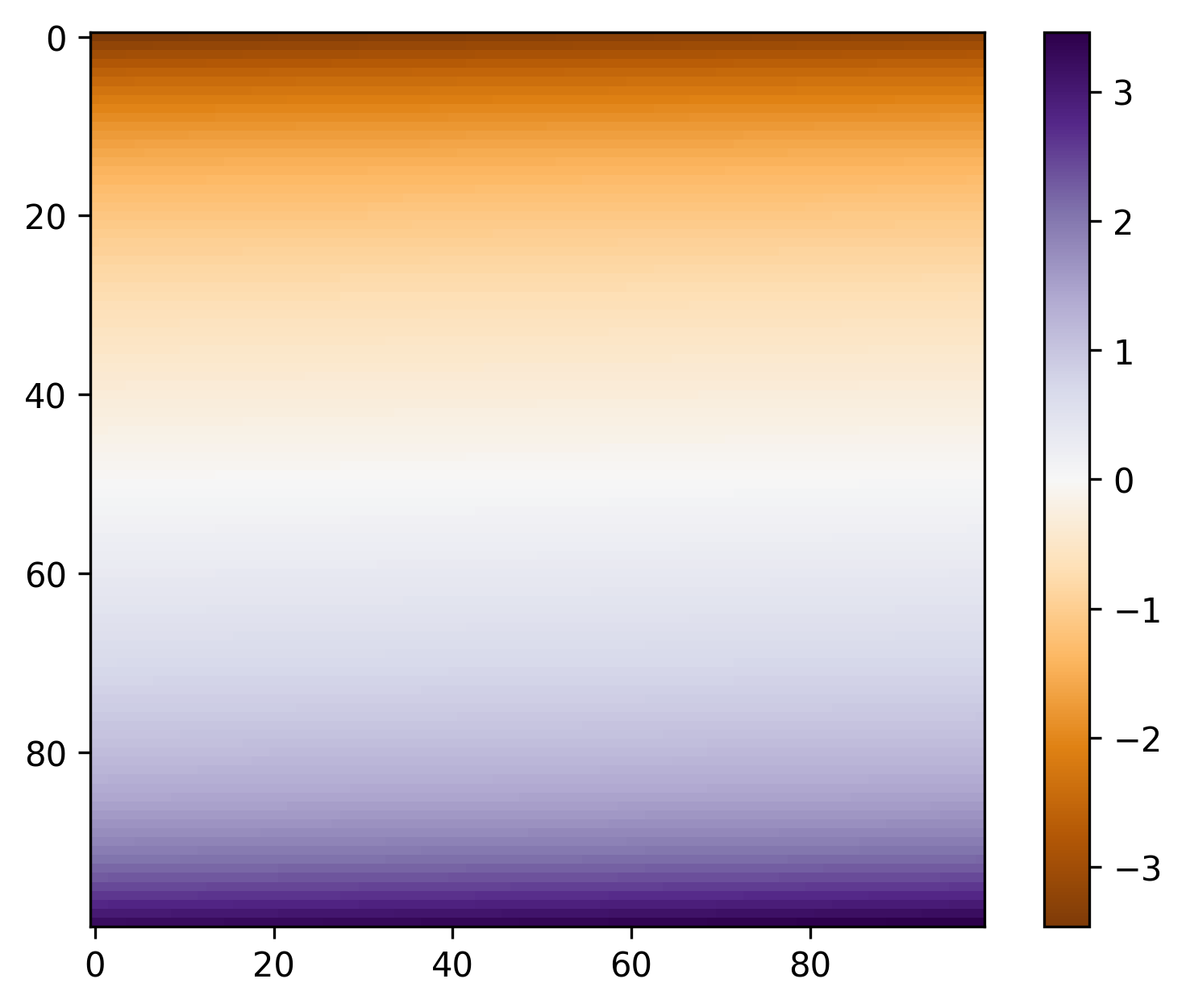

def outer_function(self, x: torch.Tensor) -> torch.Tensor:

# Truncated outer expansion inspired by the theorem-driven construction

return (

x

+ x**3 / 6

+ x**5 / 24

+ 61 * x**7 / 5040

+ 277 * x**9 / 72576

)

def forward(self, x: torch.Tensor) -> torch.Tensor:

z = self.inner_function(x)

y = self.outer_function(z)

return self.scale * y

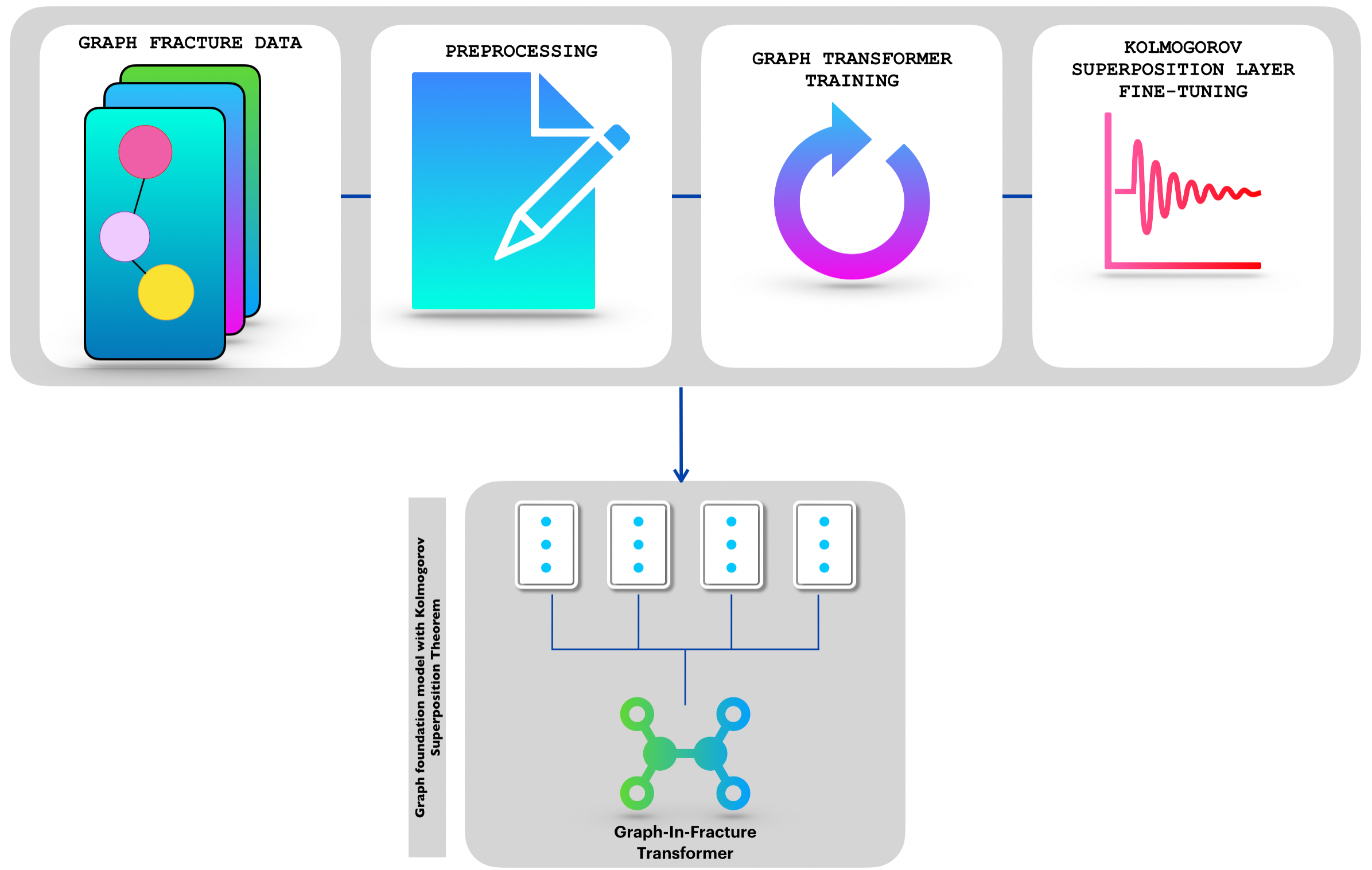

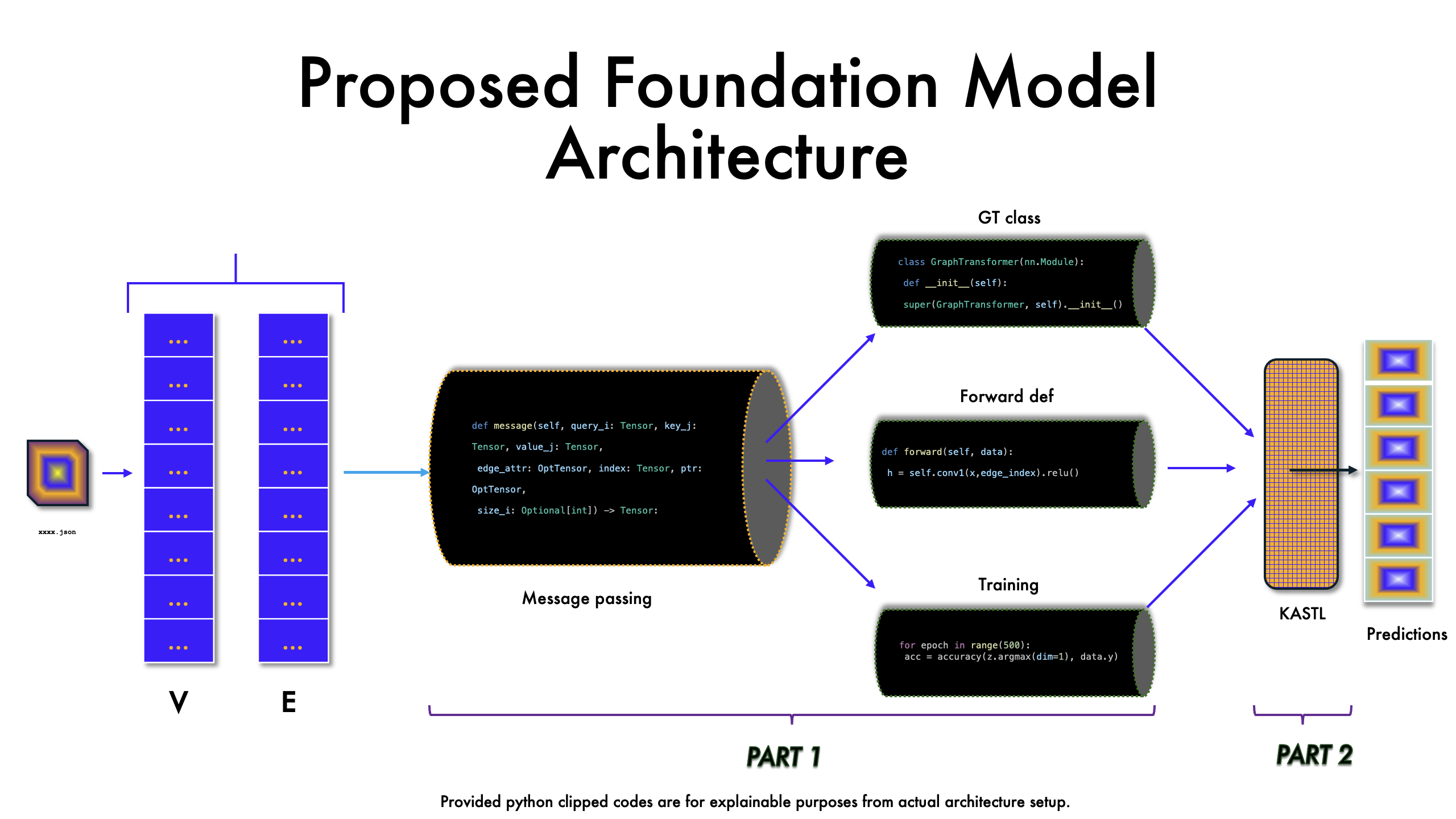

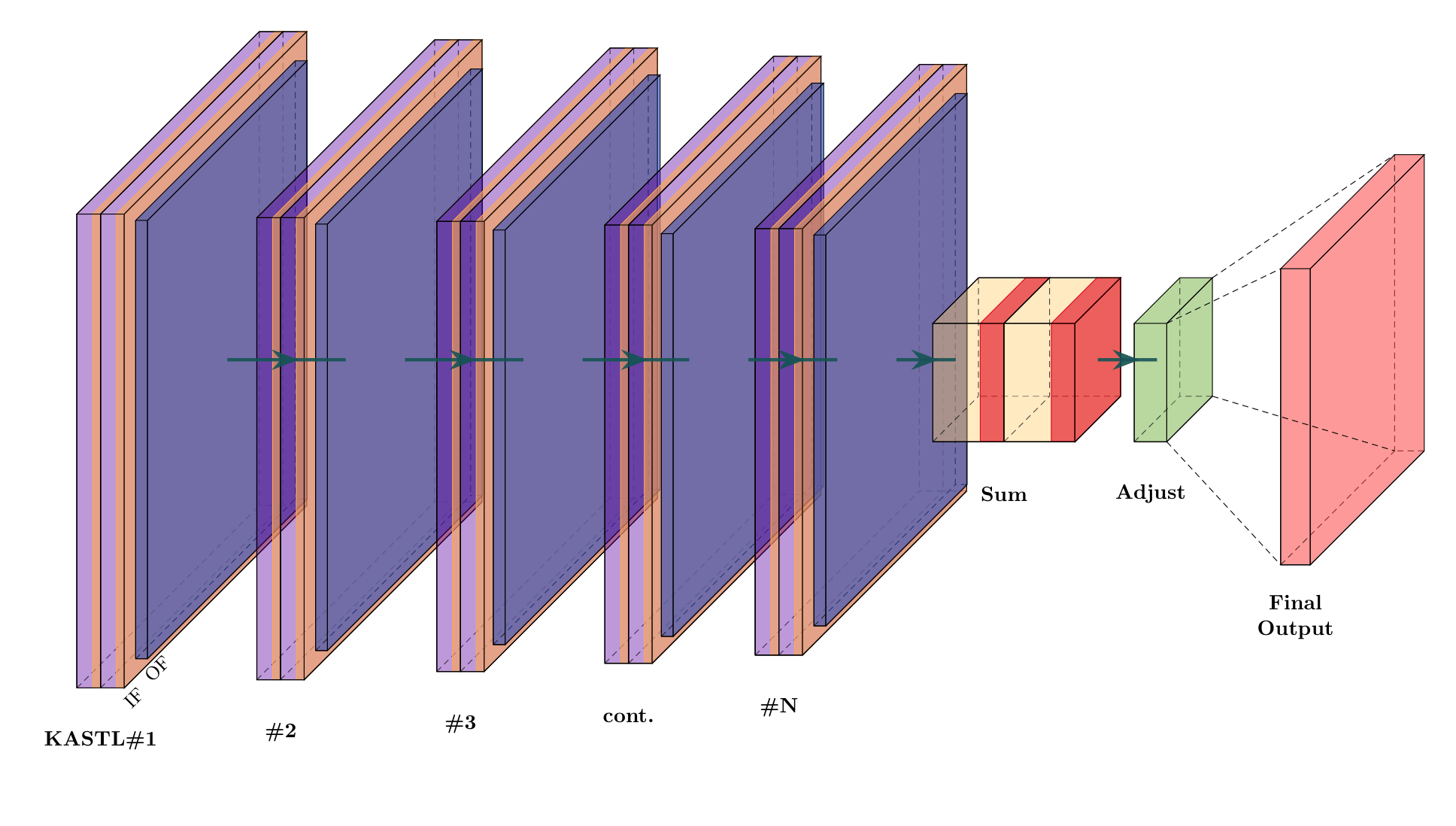

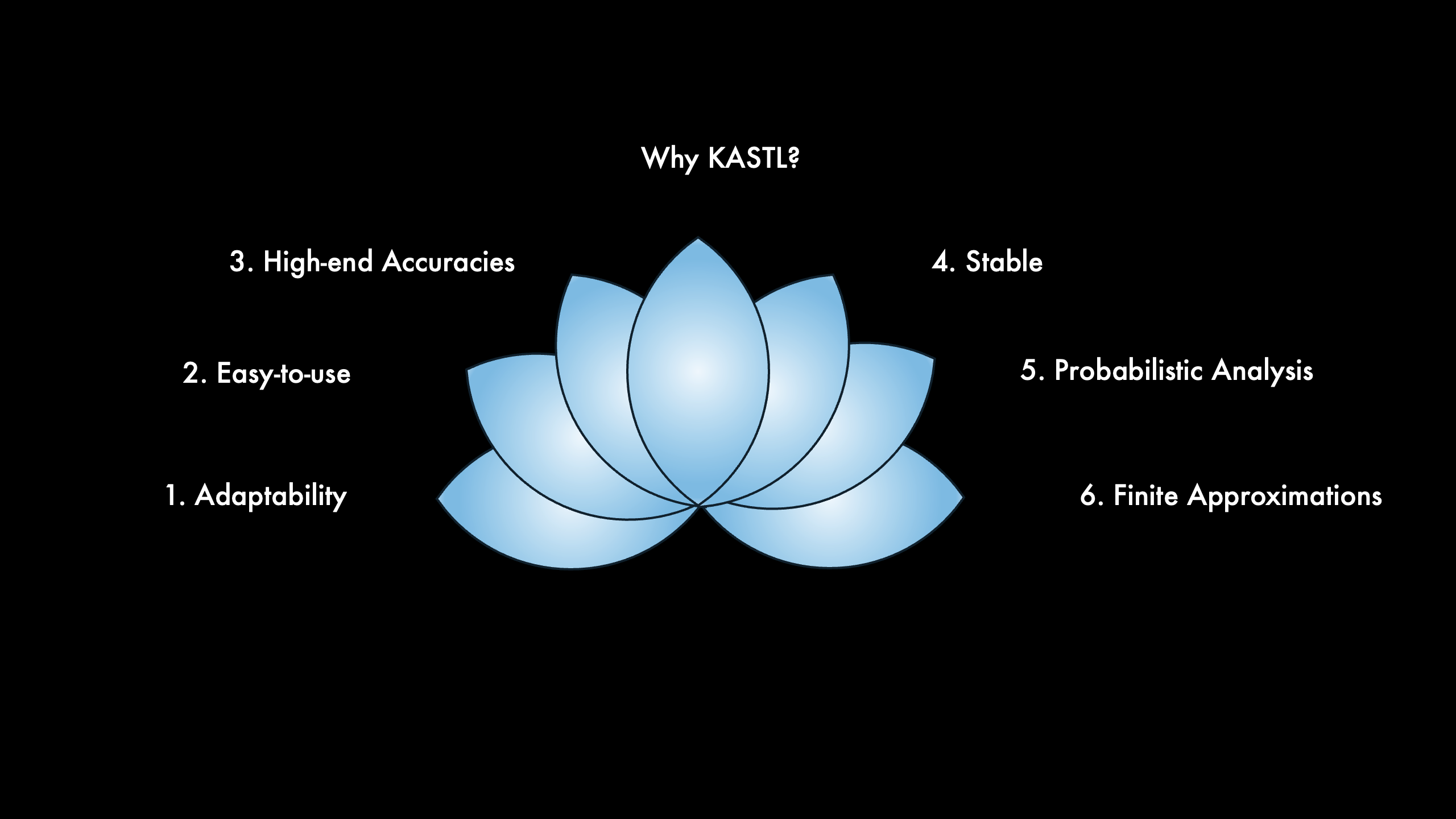
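The two stages of `KASTLLayer` are nearly inverses of each other. `inner_function` is the Gudermannian function gd(x) = atan(sinh(x)). The polynomial in `outer_function` matches the first five terms of the Maclaurin series of its inverse, gd^-1(z) = atanh(sin(z)).

The sketch below checks numerically what that implies. It is an illustrative scalar re-implementation, not the project's code. It uses Python's standard-library `math` module instead of `torch`, an assumption made so it runs without PyTorch. The helper names `inner_function`, `outer_function`, and `kastl` are hypothetical stand-ins for the layer's methods.

```python
import math


def inner_function(x: float) -> float:
    # Scalar stand-in for KASTLLayer.inner_function (hypothetical helper):
    # Gudermannian gd(x) = atan(sinh(x)), with range (-pi/2, pi/2).
    # Note: math.sinh raises OverflowError for |x| above roughly 710.
    return math.atan(math.sinh(x))


def outer_function(z: float) -> float:
    # Scalar stand-in for KASTLLayer.outer_function (hypothetical helper):
    # degree-9 Maclaurin polynomial of the inverse Gudermannian atanh(sin(z)).
    return z + z**3 / 6 + z**5 / 24 + 61 * z**7 / 5040 + 277 * z**9 / 72576


def kastl(x: float, scale: float = 1.0) -> float:
    # Scalar mirror of KASTLLayer.forward: scale * outer(inner(x)).
    return scale * outer_function(inner_function(x))


if __name__ == "__main__":
    for x in (0.1, 0.5, 1.0, 2.0, 4.0, 20.0):
        y = kastl(x)
        print(f"x={x:5.1f}  kastl(x)={y:.6f}  |kastl(x) - x|={abs(y - x):.2e}")
    # Saturation level: the polynomial evaluated at pi/2, the supremum of gd.
    print(f"output bound for scale=1: {outer_function(math.pi / 2):.4f}")
```

Near zero the two stages almost cancel, so the layer behaves like multiplication by `scale`. As |x| grows, gd(x) saturates toward ±pi/2 and the truncated series no longer inverts it. The output therefore levels off in magnitude below `outer_function(pi/2)`, which is about 3.123 times `scale`. For a positive `scale`, the resulting map is odd, monotonically increasing, and bounded.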

Poster and project assets

Use the poster as the deeper technical companion.

The poster provides a broader view of the system design, mathematical formulation, and current experimental material for GIFT-KASTL. The project page acts as the polished entry point, while the poster carries the denser technical details until additional experiments and benchmarks are added here.